2062: The World that AI Made

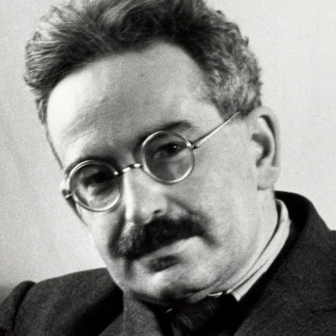

By Toby Walsh | Black Inc. | $34.99 | 336 pages

Made by Humans: The AI Condition

By Ellen Broad | Melbourne University Press | $29.99 | 277 pages

The Future of Everything: Big, Audacious Ideas for a Better World

By Tim Dunlop | NewSouth | $29.99 | 272 pages

Thinking about the implications of artificial intelligence, or AI, can be disorienting. On the one hand, we are surrounded by technological marvels: robot vacuum cleaners, watches that call the nearest hospital when we have a heart attack, machines that can outplay humans at just about any game of skill. On the other hand, many parts of life seem to be going backwards. Things we once took for granted, from the ABC to the weekend, have become “luxuries we can no longer afford.”

Seeming contradictions like these are not new. Technological change has always been uneven, making manufactured products cheaper, for instance, yet leaving many service activities largely unaffected. Increased productivity in the economy as a whole has pushed wages up, making labour-intensive services more expensive.

This divergence is much more marked with AI. Compared with earlier rounds of technological change, we are seeing a combination of incredibly rapid change and near stagnation. The acceleration of computing power has been so fast that a Series 1 Apple Watch (itself a museum piece three years after its introduction) can perform calculations as fast as the Cray X-MP, the most powerful supercomputer in the world back in 1982. The amount of digital information generated every hour of every day exceeds all the digital data created up to and including the year 2000.

By contrast, many areas of daily life have changed little over the course of a generation. The most technologically advanced item in the average kitchen is the microwave oven, first marketed to households in the 1970s. Air travel reached its peak speed with Concorde, which entered commercial service in 1976 and was withdrawn in 2003.

Every now and then, some new advance revolutionises a previously stagnant activity. The typical passenger car today is only marginally different from the models of twenty or even fifty years ago. It has smarter electronics and improved safety systems, but the experience of driving and the basic technology of the internal combustion engine are the same. Over the past decade, though, we have seen the arrival of electric cars and then of autonomous vehicles. While the future remains unclear, it seems certain that road transport will change radically over the next twenty years, and even more so over the next fifty.

Not all the new arrivals are beneficent. In 2062: The World that AI Made, Toby Walsh points to the alarming possibilities raised by autonomous weapons, of which armed drones like the Predator represent the first wave. The drone itself contains nothing fundamentally new — it’s a pilotless aircraft, equipped with cameras and missiles, that can fly for hours. The big developments are in the telecommunications systems that allow controllers on the other side of the planet to view the camera output in real time and order the firing of the missiles at any target that they choose.

At present these controllers are human, error-prone but capable of making moral choices in real time. But the development of pattern-recognition technology is such that it is already feasible to replace the human controllers with an automated control system programmed to fire when preset criteria are identified. The point at which moral choices are made, explicitly or otherwise, is in the setting of the criteria and the programming of the control system.

Further off, but by no means inconceivable, are systems whose criteria for targeting (for example, “fire on vehicles containing armed men”) are replaced by higher-level objectives. Such an objective might be “fire when the result will be a net saving of lives” or, more probably, “fire when the result will be a net saving of lives on our side.” In this case, in effect, the machines are being given moral principles and ordered to follow them.

These possibilities are alarming enough that Walsh, a professor of artificial intelligence at the University of New South Wales, and some of his colleagues organised an open letter calling on the United Nations to ban offensive autonomous weapons. The letter rapidly attracted 2000 signatures and started a process that may ultimately lead to a new international convention. As the history of disarmament proposals has shown, though, the resistance to any restriction on lethal technology is always formidable and usually successful.

The theme of human choice is developed further in Ellen Broad’s Made by Humans, an excellent analysis of the way the seemingly magical character of AI hides built-in human biases. Among Broad’s central observations is that the word “algorithm” is now being used in a new way, something I hadn’t noticed until she pointed it out.

For the last thousand years or so, an algorithm (the word is derived from the name of the Persian mathematician al-Khwarizmi) has had a pretty clear meaning: a well-defined formal procedure for deriving a verifiable solution to a mathematical problem. The standard example, Euclid’s algorithm for finding the greatest common divisor of two numbers, dates back to around 300 BCE. There are algorithms for sorting lists, for maximising the value of a function, and so on.
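To make the classical sense of the word concrete, here is a minimal sketch of Euclid’s algorithm in Python. It uses the modern remainder form; Euclid’s own version proceeded by repeated subtraction, but both reach the same answer. The function name `gcd` is simply an illustrative choice. The point is that every step is fixed in advance and the result can be checked independently.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers by Euclid's algorithm."""
    # Repeatedly replace (a, b) with (b, a mod b) until the remainder is zero;
    # the last non-zero value is the greatest common divisor.
    while b != 0:
        a, b = b, a % b
    return a


if __name__ == "__main__":
    # 1071 -> 462 -> 147 -> 21 -> 0, so the answer is 21.
    print(gcd(1071, 462))
```

Anyone can verify the answer by confirming that 21 divides both 1071 and 462, which is exactly the kind of verifiability the older meaning of “algorithm” implied.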

As their long history indicates, algorithms can be applied by humans. But humans can only handle algorithmic processes up to a certain scale. The invention of computers made human limits irrelevant; indeed, the mechanical nature of algorithmic procedures made them ideally suited to computers. On the other hand, the hope of many early AI researchers that computers would be able to develop and improve their own algorithms has so far proved almost entirely illusory.

Why, then, are we suddenly hearing so much about “AI algorithms”? The answer is that the meaning of the term “algorithm” has changed. A typical example, says Broad, is the use of an “algorithm” to predict the chance that someone convicted of a crime will reoffend, drawing on data about their characteristics and those of the previous crime. The “algorithm” turns out to over-predict reoffending by Black defendants relative to white defendants.

Social scientists have been working on problems like these for decades, with varying degrees of success. Until very recently, though, predictive systems of this kind would have been called “models.” The archetypal examples — the first econometric models used in Keynesian macroeconomics in the 1960s, and “global systems” models like that of the Club of Rome in the 1970s — illustrate many of the pitfalls.

A vast body of statistical work has developed around models like these, probing the validity or otherwise of the predictions they yield, and a great many sources of error have been found. Model estimation can go wrong because causal relationships are misspecified (as every budding statistician learns, correlation does not imply causation), because crucial variables are omitted, or because models are “over-fitted” to a limited set of data.

Broad’s book suggests that the developers of AI “algorithms” have made all of these errors anew. Pneumonia patients with asthma are classified as being at low risk when in fact their good outcomes are due to the more intensive treatment they receive. Models that are supposed to predict sexual orientation from a photograph work by finding non-causative correlations, such as the angle from which the shot is taken. Designers fail to consider elementary distinctions, such as those between “false positives” and “false negatives.” As with autonomous weapons, moral choices are made in the design and use of computer models. The more these choices are hidden behind a veneer of objectivity, the more likely they are to reinforce existing social structures and inequalities.
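The distinction between false positives and false negatives is easy to state in code. The sketch below, in Python, computes both error rates for a yes/no prediction such as “will reoffend”. The function and field names are hypothetical, and the two small groups of predictions are made-up toy data, not figures from Broad’s book. The point is only that a model can err in different directions for different groups, flagging one group’s non-reoffenders as high risk while missing the other group’s reoffenders.

```python
from dataclasses import dataclass


@dataclass
class ErrorRates:
    false_positive_rate: float  # share of actual non-reoffenders flagged as high risk
    false_negative_rate: float  # share of actual reoffenders flagged as low risk


def error_rates(predicted: list[bool], actual: list[bool]) -> ErrorRates:
    """Compute false positive and false negative rates for binary predictions."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must be the same length")
    # A false positive: predicted True, actually False.
    fp = sum(p and not a for p, a in zip(predicted, actual))
    # A false negative: predicted False, actually True.
    fn = sum(a and not p for p, a in zip(predicted, actual))
    negatives = sum(not a for a in actual)
    positives = sum(a for a in actual)
    return ErrorRates(
        false_positive_rate=fp / negatives if negatives else 0.0,
        false_negative_rate=fn / positives if positives else 0.0,
    )


if __name__ == "__main__":
    # Toy, made-up data for two hypothetical groups.
    group_a = error_rates([True, True, False, True], [True, False, False, False])
    group_b = error_rates([False, True, False, False], [True, True, False, False])
    print(group_a)
    print(group_b)
```

Which kind of error matters more, and for whom, is a choice the designer makes; no amount of computation settles it.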

The superstitious reverence with which computer “models” were regarded when they first appeared has been replaced by (sometimes excessive) scepticism. Practitioners now understand that models provide a useful way of clarifying our assumptions and deriving their implications, but not a guaranteed path to truth. These lessons will need to be relearned as we deal with AI.

Broad makes a compelling case that AI techniques can obscure human agency but not replace it. Decisions nominally made by AI algorithms inevitably reflect the choices made by their designers. Whether those choices are the result of careful reflection, or of unthinking prejudice, is up to us.

Beyond specific applications of AI, the technological progress it generates will have effects throughout the economy. Unfortunately — as happened during earlier rounds of concern about technology — the discussion has for the most part been reduced to the question, “Will a robot take my job?” Walsh and Broad both point to the simplistic nature of this reasoning.

A more comprehensive assessment of the economic and political implications of AI comes in Tim Dunlop’s The Future of Everything. (Disclosure: I’ve long admired Dunlop’s work, and I wrote an endorsement of this book.) Rather than focusing on AI, Dunlop is reacting to the intertwined effects of technological change and the dominant economic policies of the past few decades, commonly referred to as neoliberalism or, in Australia, economic rationalism.

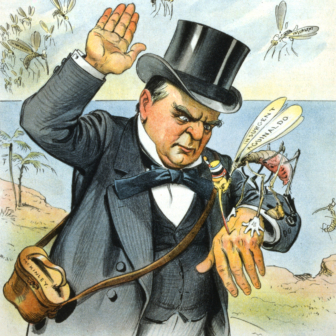

The key problem is not that jobs will be automated out of existence. In a system dominated by the interests of capital, the real risk is that technological change will further concentrate wealth and power in the hands of the dominant elite often referred to as the 1 per cent. As Dunlop says, radical responses are needed.

The most obvious is a reduction in working hours. This has been one of the central demands of the working class since the nineteenth-century campaign for an eight-hour working day. After a century of steady progress, the trend towards shorter working hours halted, and even to some extent reversed, in the 1970s. The four decades of technological progress since then have produced no significant movement.

This is a striking illustration of the fallacy of technological determinism. Under different political and economic conditions, information and communications technology could already be providing us with the leisured life envisioned by futurists of the 1950s and 1960s. Instead, it has become a tool for keeping us tethered to the office on a 24/7/365 basis.

Closely related is the question of flexible working hours. As Dunlop observes, “flexibility” is an ambiguous term. Advocates of workplace reform praise flexibility, but what they mean is top-down flexibility, the ability of managers to control the lives of workers with as few constraints as possible. Bottom-up flexibility, the ability of workers to control their own lives, is directly opposed to this. To put it in the language of game theory, bargaining over flexibility is (most of the time) a zero-sum game: what managers gain, workers lose.

More radical ideas include treating data as labour and moving to collective ownership of technology. Some of the most valuable companies in the world today, including Facebook and Alphabet (owner of Google), rely almost entirely on data generated by users of the internet. “We are all working for these tech companies for free by providing our data to them in a way that allows them to hide our contribution while benefiting immensely from it,” writes Dunlop. “It is way past time that we were paid for this hidden labour, potentially using that income to offset reductions in our formal working hours.”

Dunlop suggests that taxes on the profits of tech companies could be used to finance a universal basic income, which would provide everyone with an income sufficient to live on, whether or not they were engaged in paid work.

The collective ownership of technology sounds radical, but it is, in many respects, an extension of that same argument. Increasingly, technology is embodied not in large pieces of equipment, like blast furnaces or car factories, but in information: computer code, data sets and the protocols that integrate the two. As Stewart Brand observed back in 1984, information wants to be free. In the absence of legal restrictions or secrecy, that is, a piece of information can be replicated indefinitely, without interfering with the access of those who already have it. As the cost of communications and storage drops, so does the cost of replicating and transmitting information.

Of course, there are many reasons, such as privacy, why we might want to restrict access to information. But concerns about privacy have been largely disregarded under neoliberal policies. On the other hand, strenuous efforts have been made to protect and extend “intellectual property,” the right to own information and prevent others from using it without permission. These rights, supposedly given as a reward to inventors and creators, almost invariably end up in the hands of corporations.

From this perspective, longstanding demands for workplace democracy and worker control are merging with the critique of intellectual property largely driven by technical professionals. For these workers, the realities of the information age are incompatible with the thinking behind intellectual property. As Dunlop says, worker ownership is “another way of changing how we think about technology… not just a means to a fairer society, but a demand that fundamentally changes how we understand the creation and distribution of work and wealth.”

There’s a lot more in these books, and particularly Dunlop’s, than can be covered in a brief review. Each provides useful correctives to the lazy thinking about job-stealing robots and infallible algorithms that dominates much of our public discussion. And all centre on the same basic point: while technology has its own logic, the way technology is used is a matter of choice.

The key question is: who gets to make those choices? Under current conditions, they will be made by and for a wealthy few. The only way to democratise choice about technology is to make society as a whole more democratic and equal. •